Assessment of Universal Competences

ILLUSTRATIONS, GRAPHS, AND INFOGRAPHICS BY DARIA DELONE

In order to remain competitive in the labour market, university graduates must be proficient not only in professional knowledge and skills, but also in a set of universal competences (UC). These include critical thinking, digital and financial literacy, creativity, initiative, and others. However, higher education systems face problems in assessing such competences due to a lack of developed approaches and methodologies. A report released by the HSE Institute of Education, ‘An Assessment of Universal Competences as Higher Education Learning Outcomes’, analyses the ways in which these challenges have been addressed in both Russia and abroad. Institute researchers presented the report at the XXII April International Academic Conference on Economic and Social Development, organised jointly by HSE University and Sberbank.

Universal Competences Are the Key to Successful Adaptation in Today’s World

The concept of universal competences is rather vague in the academic sphere. In Russia, however, they are included in the Federal State Educational Standards (FSES), where they are understood as competences that contribute to success in a wide range of professional activities.

The report was prepared by the world-class research centre ‘The Human Capital Multidisciplinary Research Centre’.

Globally, both in research and in practice, these competences are denoted by different terms depending on the context. In the corporate environment, the term ‘soft skills’ is more common, in contrast to hard skills: the knowledge and skills required to perform professional tasks in a particular workplace. In education, the terms ‘21st century skills’ and ‘higher order thinking skills’ are more common. They refer to a set of skills required for successful adaptation in the modern world, not just in the labour market.

The core competencies, recognised by all major players, include critical and creative thinking, project management skills, teamwork, communication and effective collaboration, and self-organisation and self-development. The list of core competencies adopted by the European Commission includes: problem solving, communication, creativity, and initiative.

A number of Russian universities not only affirm the need to adopt UC, but also consistently implement relevant programmes. These universities include HSE University (which has introduced courses in data culture, entrepreneurship, and soft skills development), Far Eastern Federal University (which offers training to develop soft skills, including leadership, teamwork, time management, and critical thinking), Kazan Federal University (which established the Universum+ Competence Development Centre), ITMO University, and others.

All of these competencies, as the authors of the report point out, are 'complex constructs', each of which can be divided into components. In critical thinking, for example, the most commonly identified sub-components are analysis, synthesis, and causal reasoning. These, in turn, are divided into smaller units. The competence of teamwork is related to communication skills (reception and transmission of information), self-regulation, and goal setting (setting group goals, prioritisation, role allocation).

International Experience in Assessing Universal Competences

As complex constructs, competencies require a specific approach to modelling and measurement. This is the challenge facing research teams in different countries today.

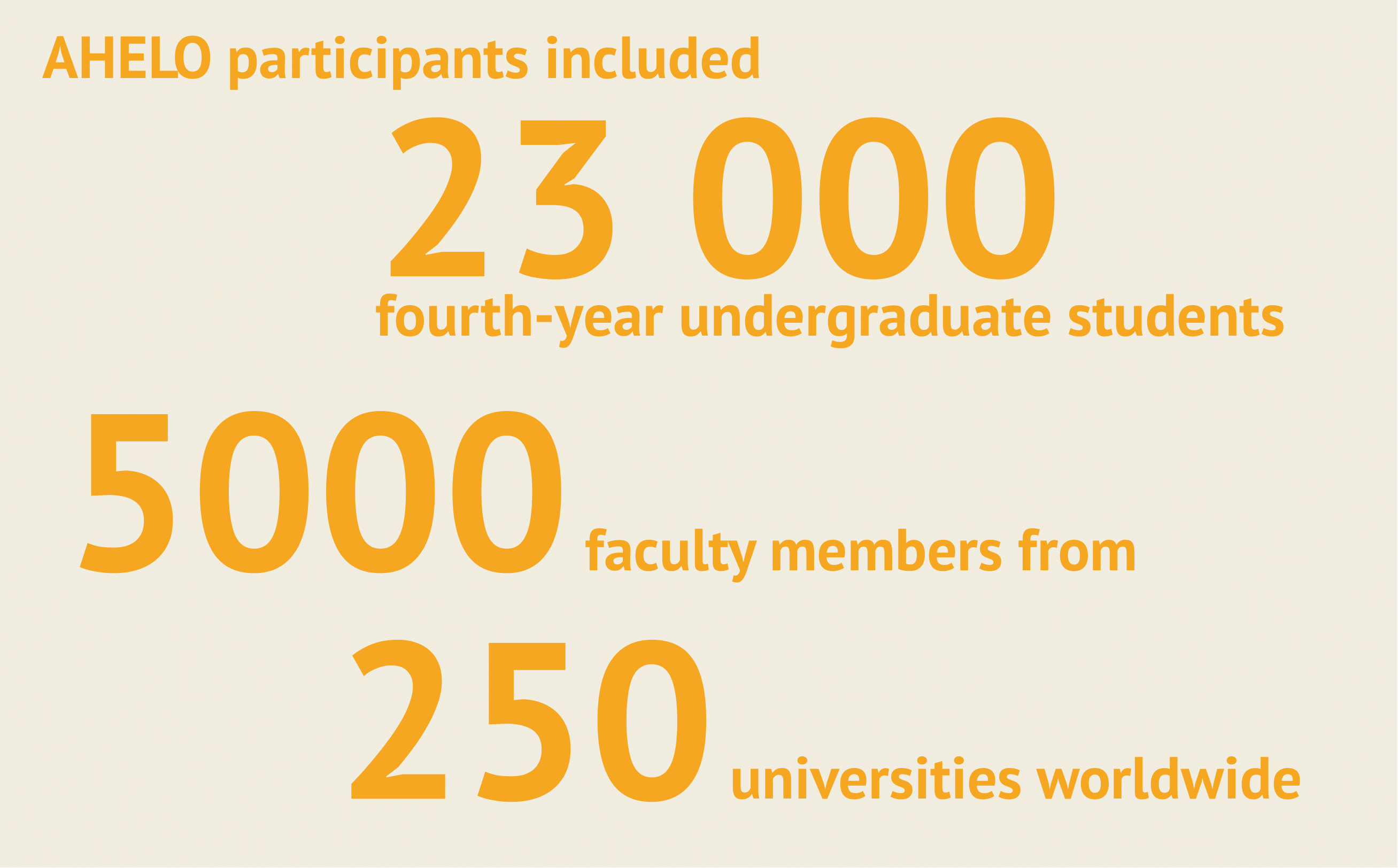

The procedures associated with the Bologna Process of 2000 were the impetus for developing standardised assessment systems at national levels for both subject and non-subject higher education outcomes. Such international projects include iPAL (Performance Assessment of Learning in Higher Education) and AHELO (Assessment of Higher Education Learning Outcomes).

Most of them include both professional and universal competence assessments. They involve a large number of participants from different countries.

But the project, as the author notes, has not been fully implemented. The main problem is that the organisers have failed to create reliable tools that take into account linguistic, cross-cultural, and inter-university differences. Nevertheless, the popularity of the project in many OECD countries reflects the growing desire to establish international standards and metrics to assess undergraduate student competencies, and especially universal competencies.

Standard assessment methods are not appropriate for measuring UC constructs. It is better to use an alternative type of tool in which the task model describes continuous, time-consuming activities rather than their individual components. Examples include tools based on scenario-based tasks, games, and simulations.

There are advanced methodological techniques for developing such instruments. One of these is Evidence-Centred Design (ECD), a 'systematic approach to the development of performance measurement tools'.

ECD includes the following components:

- Operational definition of the construct — the content to be measured by a specific tool.

- Description of the structure of what will be assessed through the respondents' actions.

- Formation of an assignment model: defining specific actions to be taken by the test subject.

- Development of a scoring system and measurement model.

The advantage of this methodology is that it builds the logic of tool development, from design concept to observed behaviour.
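The four ECD components can be pictured as a small data structure. The sketch below is only an illustration under stated assumptions: the class names `Task` and `Instrument`, the task identifiers, and the answer keys are all hypothetical and do not come from the report, and a simple sum of points stands in for the measurement model, which in a real instrument would be considerably more sophisticated.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Task:
    """Task model: one concrete action the test-taker performs."""
    task_id: str
    component: str                 # sub-component this task gives evidence for
    rule: Callable[[str], int]     # scoring rule: response -> points


@dataclass
class Instrument:
    construct: str                 # operational definition: what is measured
    components: List[str]          # structure of what is assessed
    tasks: List[Task] = field(default_factory=list)

    def score(self, responses: Dict[str, str]) -> Dict[str, int]:
        """Placeholder measurement model: add up points per component."""
        totals = {name: 0 for name in self.components}
        for task in self.tasks:
            if task.task_id in responses:
                totals[task.component] += task.rule(responses[task.task_id])
        return totals


# Hypothetical two-task instrument; the answer keys are invented.
instrument = Instrument(
    construct="critical thinking",
    components=["analysis", "causal reasoning"],
    tasks=[
        Task("t1", "analysis", lambda r: 1 if r == "b" else 0),
        Task("t2", "causal reasoning", lambda r: 1 if r == "a" else 0),
    ],
)
result = instrument.score({"t1": "b", "t2": "c"})
print(result)  # {'analysis': 1, 'causal reasoning': 0}
```

Keeping the scoring rule attached to each task mirrors the ECD idea that every observed action is tied in advance to the part of the construct it is meant to provide evidence for.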

Information and Communication Competence at School

The ECD methodology is also used in primary and secondary school education. In Russia, instruments have been developed within the framework of this methodology at HSE University. They measure such parameters as information and communication competence (IC-competence), 4C (creativity, critical thinking, communication and cooperation), and digital literacy.

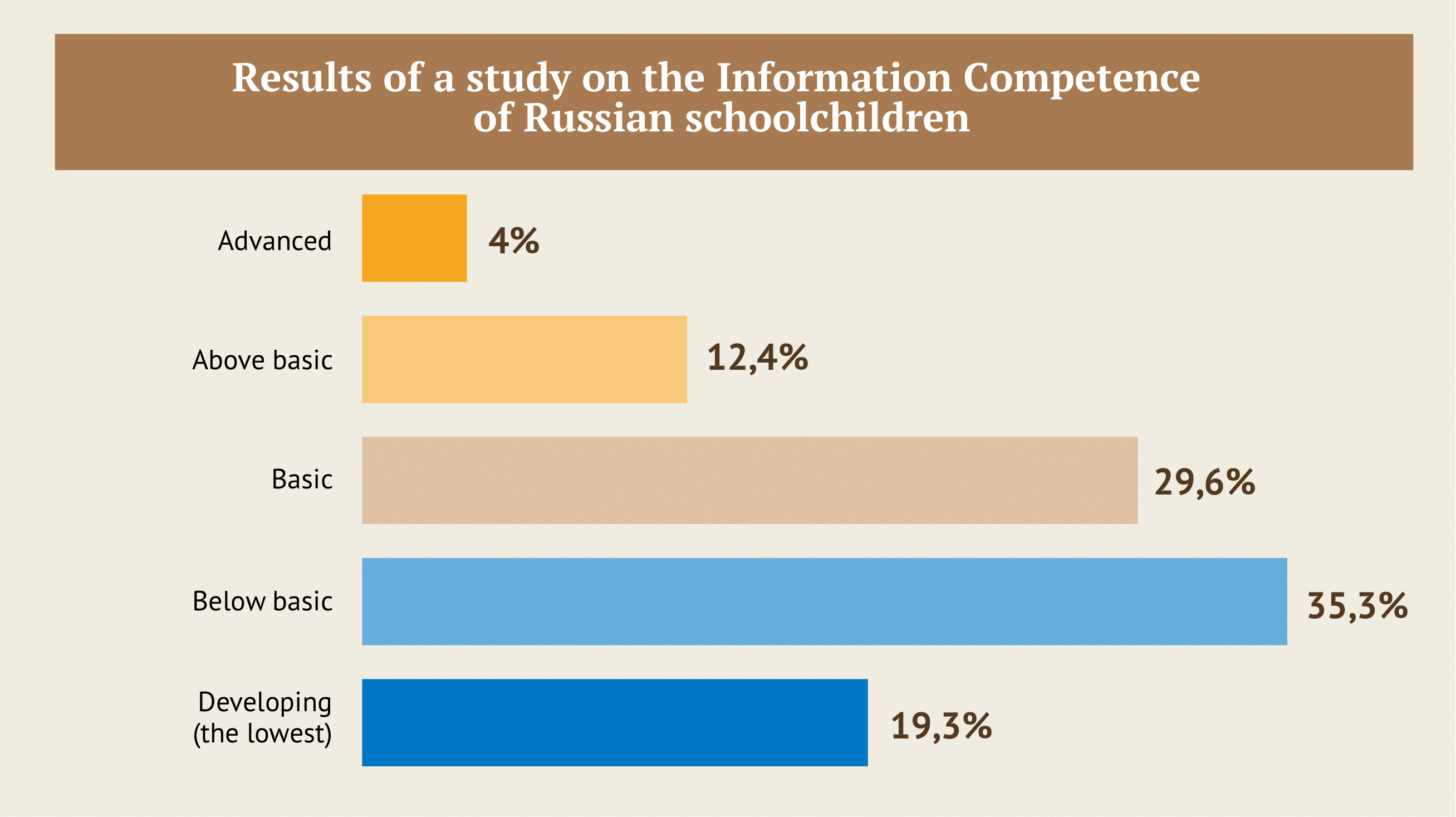

The Information and Communication Competence (IC) test assesses a basic school leaver’s ability to use computers and other forms of modern technology to acquire new knowledge, communicate, and do research. The test is aimed at 13-14-year-old pupils. Five levels of IC competence are distinguished: advanced, above basic, basic, below basic, and developing.

In 2020, a monitoring survey was conducted among 9th-year pupils in schools from 21 regions of Russia. More than 30,000 pupils participated.

The results showed that 29.6% of pupils have a basic level of IC competence, 12.4% are above the basic level, and just over 4% are at the advanced level. ‘These are the basic school graduates who will have no difficulty being prepared for lifelong learning and will be prepared for life in the information and digital society’, the report says. The remaining pupils, with below basic (35.3%) and developing (19.3%) scores, need support to develop digital information skills.

Critical Thinking in Higher Education

The ECD methodology, as noted by the report’s author, can be applied to a wide range of constructs (critical thinking, problem solving, creativity, communication, different types of functional literacy, and others), while remaining age-independent. As an example, the report looks at research approaches and Russian practice in relation to one such universal competence: critical thinking.

The concept of critical thinking is not easy to describe. A peculiarity of its research is the absence of a conventional theoretical framework. As a working definition, the report presents the following: critical thinking is a set of knowledge, skills and dispositions to rationally analyse and evaluate information for reasoned decision-making.

In 2015-2018, an international group of researchers conducted the ‘Supertest’ study (Study of Undergraduate Performance), aimed at measuring the critical thinking skills of engineering students in Russia, China, India, and the USA. One of the findings of the study was the alarmingly low level of critical thinking skills among future engineers. It turned out that Russia, China, and India lag behind the US in developing this skill.

In Russia, as the author notes, there are practically no psychometrically verified, high-quality tools for assessing critical thinking at any level of education. This problem is currently being addressed by a team from the HSE Institute of Education, which is developing a targeted research design, innovative approaches, and integrative assessment models covering CT (critical thinking) and COR (critical online reasoning) skills.

The tool will measure critical thinking skills in an online environment: the student's ability to analyse beliefs, assumptions, and arguments, make causal connections, select logically correct and convincing arguments, find explanations, draw conclusions, and form their own position when solving problems.

The content of the scenario tasks is not linked to a particular area of study — the tasks are the same for everyone. ‘The fact that some students, due to their background, may be familiar with a particular topic is compensated by the fact that all tasks are aimed at solving different problems (e.g., choosing a psychotherapist, protecting personal data, or assessing the statements of representatives of Soviet informal art),’ notes the author.

The test simulates a situation in which the student is confronted with information from his or her everyday life. Critical thinking is thus assessed as a competence for real-life activities. Within a single instrument, it is applied to tasks that differ in content and objectives.

Domestic Education Standard and Reality

The development and assessment of the universal competencies of university graduates is undoubtedly a trend that will develop and change the system of higher education. Despite the presence of cutting-edge UC development projects in individual universities, it is still too early to talk about a significant spread of such practices in Russian higher education, notes the author of the report. The main reason for this is the lack of tools for assessing the achievement of educational outcomes. At the same time, the vast majority of universities continue to focus on transferring knowledge by traditional means, the report says.

Although UC are included in the FSES of higher education in Russia, the wording of many of them is not specific enough. In addition, modern methods of education quality assessment (EQA) are limited to accreditation, licensing, control, and supervision by the state. The sources of information on the level of UC development today are the results of individual research projects, as well as the opinions of employers and experts.

Valid and reliable tools for measuring student competences, meanwhile, are critical for improving educational outcomes. Without them, the development of human capital is hindered. Consequently, assessment tools in higher education should cover all educational outcomes, including universal competences.

This material is based on the report ‘An Assessment of Universal Competences as Higher Education Learning Outcomes’ by a team from the HSE Institute of Education, including Elena Kardanova, Pavel Sorokin, Ekaterina Orel, Taras Pashchenko, Yury N. Koreshnikov, et al.